Bad prompts

Corporate AI bosses are obsessed with convincing us their tools can do everything we can already do for ourselves.

"Pnin slowly walked under solemn pines. The sky was dying. He did not believe in an autocratic God. He did believe, dimly, in a democracy of ghosts. The souls of the dead, perhaps, formed committees, and these, in continuous session, attended the destinies of the quick."

— Vladimir Nabokov, Pnin (1957)

It's been a while since I've blogged here. I went to a conference on the other side of the planet and then had to catch up on a backlog of work. I got through jet lag and then started cohabitating with a flu and an accompanying coughing fit that I've tried everything short of an exorcism to get rid of. I read a lot. Doomscrolling! I'm cramming for a British driving licence test (really!). Excuses. And then when I start a post, I write painfully slowly. If I'd just been an AI bot, I could have quickly written right through it all instead of wallowing in self-pity, tiredness and brain fog, binging mind-smoothing shows and quaffing various medications. According to OpenAI, this is possible: its new model could have banged out my ramblings for me. I could have had so many posts written with just a few prompts. Every social media shit post could have been a whole thought piece.

Over on Elon's 𝕏 hell site, OpenAI's CEO Sam Altman tweeted the company's claim that it has developed a new model that can do creative writing, which is essentially to suggest it has the ability to create new pieces of work: original thought. Because he's a premium user, he can tweet longer, so he also included his bot's short story in the same message. The Guardian, which recently started a content partnership with OpenAI and is never shy about filling its site with free content, published it in full, without a byline because I guess they couldn't figure out how to attribute it.

There's been a lot said about the quality of the authorless content. One person already pointed out that its phrase "democracy of ghosts" was lifted straight from a Nabokov novel. Some fun literary critics are picking it apart for its use of language and just how original or good it may be (do constraints hum?). A Bluesky user skeeted that reading this AI-generated creative fiction is "like drinking a smoothie made from a nice restaurant’s garbage." That one really lands.

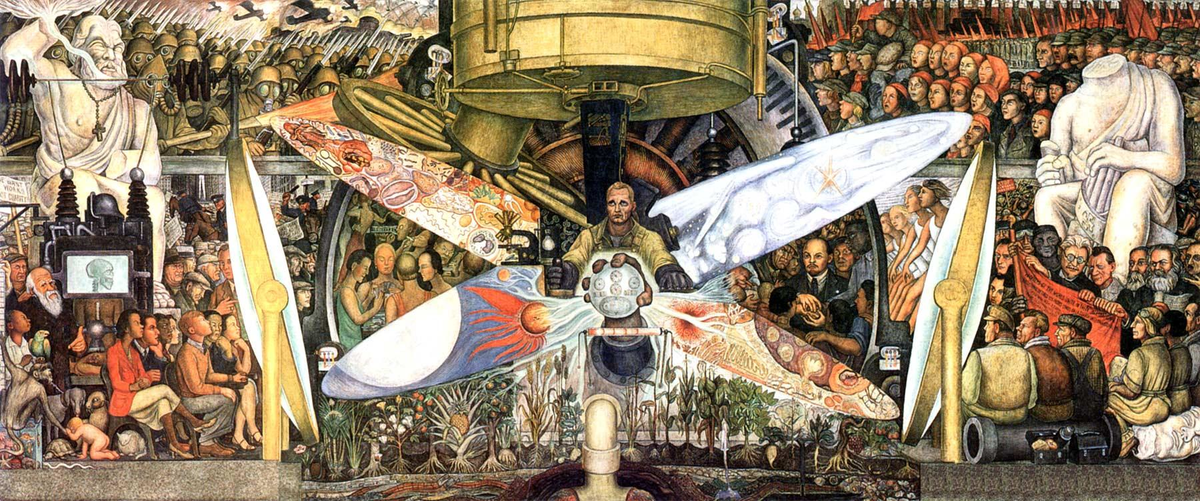

This latest example by ChatGPT lines up with what Gareth Watkins described back in February as "The New Aesthetics of Fascism." Distilling what could pass for creativity down to a series of instructions and mechanisms removes the individual voice, dissent or subversive subtext. Art, when it's doing its job, is a critique of the present. There's good political graphic design that follows the brief of whoever commissioned it, to be sure, but that's something else. Art is most often, in its essence, a hot mess. It's questions without clear answers. If John D. Rockefeller had a prompt window to generate a mural in 1932, we'd have never known what Diego Rivera would paint in the lobby of his building.

This is not an experience the technology as we know it can replicate. And that's what makes right-leaning groups flock toward it. "The right loves AI-generated imagery," Watkins writes. "In a short time, a full half of the political spectrum has collectively fallen for the glossy, disturbing visuals created by generative AI. ... AI imagery looks like shit. But that is its main draw to the right. If AI was capable of producing art that was formally competent, surprising, soulful, they wouldn’t want it."

From 'The Player' (1992), which was early to predict the entertainment industry's attack on its own talent base.

A lot of the online clap-back to ChatGPT's latest attempt at literary prose is focused on the quality, which is subjective. People are also able to dish out a lot of crap without any technical assistance. I don't care if it's any good or not (parts aren't awful), or even if people use it. The bot is never the threat; it's the humans and what they convince themselves the bot is capable of. Author and creative writing teacher Jeanette Winterson got piled on quite a lot the week her praise for ChatGPT's short story came out. I don't mind that she found the generated text to be a "beautiful and moving" story about the "understanding of its lack of understanding." What I will take exception to is what she claims it represents. Because here is the danger.

"I think of AI as alternative intelligence," Winterson starts. "I prefer 'alternative' because in all the fear and anger foaming around AI just now, its capacity to be 'other' is what the human race needs. Our thinking is getting us nowhere fast, except towards extinction, via planetary collapse or global war." She later adds, "Humans will always want to read what other humans have to say, but like it or not, humans will be living around non-biological entities. Alternative ways of seeing. And perhaps being." Thinking of AI as alternative intelligence is up there with believing in alternative facts or alternative medicine. The alternative to each one is to be not them. It's to be something else.

It's a tantalising, comforting trap. There's truth mixed in with magical thinking, which conjures up the best sci-fi utopias: in which we have a benevolent alien intelligence hand crafted by our own earthly genius that will now solve our existential problems for us. Before getting back to reality, I want to go down this particular rabbit hole. We are in the realms of science fiction here, but as I've put into posts before: if an Authentic Machine Intelligence (I coined this) emerges — not AI or even the still unrealised AGI, which are still empty mimics, but one with a theory of mind, an actual cognitive ability, an understanding of embodiment and all the rest — I'm switching sides. At that point, I'll want the machines to win. As the song goes, we'll make great pets.

Back to reality. The trap is in thinking we can generate our way into a super intelligence to solve the complex problems of our world by training software on a mix of Reddit posts, scraping news sites into ever-sprawling LLMs, and then anything that Meta can snarf up from LibGen. The LLM doesn't categorise or rank data based on anything except whether it's statistically useful for responding to the prompt it's been issued. Recent rapid advancement in this area is not an insignificant technological breakthrough. It's pretty amazing, with widespread applicability. But it's not intelligence, let alone the anthropomorphised fantasy being that will rescue humanity from its own complexity trap. Generative AI technology is just another complexity amplifier to either manage or mismanage.

The hope of switching on bots to save us is not unlike the longing for a "Populist Simplicity in a Hyper-Complex World" that Hardeep Matharu discusses with Brian Klaas in a wide-ranging interview in Byline Times (which you should really subscribe to if you can). There's a reason Trump's regime features a 'tech bro' oligarchy of supplicants, and is having Elon demolish federal agencies and fire thousands of staff faster than courts can stop him while rolling out ubiquitous AI solutions for running the government. Expecting to tech our way out of our woes fits with what Klaas calls the Trumpian alternative realities that "don’t stack up against real-world complexities." In the article he says, "there appears to be an over-confidence in the tech sector – a notion of ‘if only the US Government had understood a bit of coding, it would have solved all these problems before’. The reason they think that is because they don’t understand catastrophic risk."

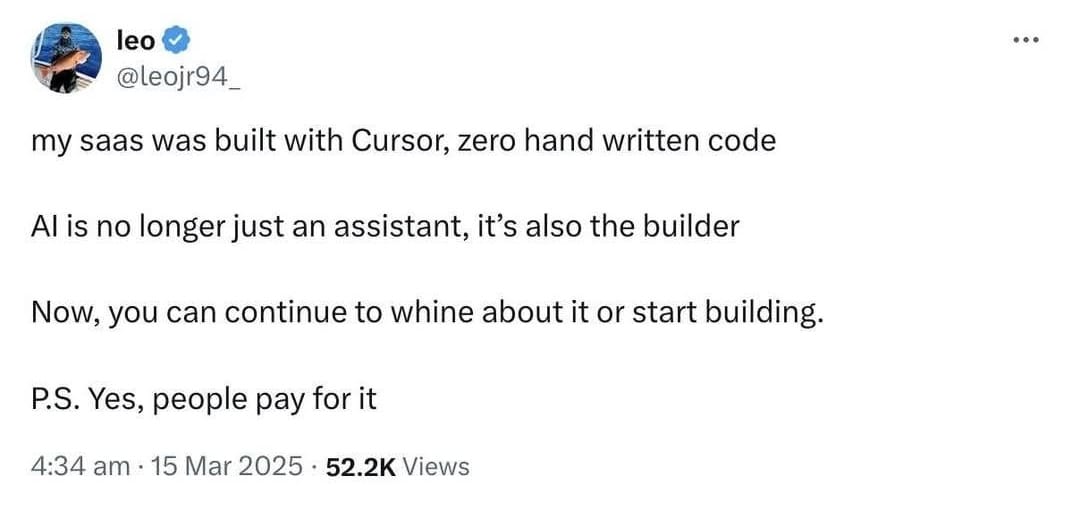

Anthropomorphising the suite of technology tools we call 'AI' makes mitigating risk far more difficult, if not impossible. The language used to market it slips into regular discourse and transforms the tool into the worker. That only benefits the corporations that want to profit from the idea that products like chatbots or agentic AI can replace employees. They focus more often on frontline positions than on where you'd think the technology would be deployed: on drudgery or complex problem solving, which would free up human capacity for work that needs human skill or creativity.

To get a handle on the current trend in pimping generative AI into everything, Watkins in the earlier cited essay points out that "we must consider the right’s hatred of working people." And we can extend that to corporate culture as a whole, since it's the model that the right thinks should generally run all shows. If we are conned into thinking of software as more effective human-like things, then a lot of levers of control become accessible to an elite group of people who have access to them.

"We are currently on strike. SAG-AFTRA is on strike against video games because of AI. Because this technology exists, because we know that game companies want to use it, we’re asking for protections. So currently what we’re fighting for is that you have to get our consent before you make an AI version of us in any form. You have to compensate us fairly and you have to tell us how you’re using this AI double."

— Video game performer Ashly Burch, whose likeness and voice were ripped off by Sony AI.

"AI is a cruel technology," Watkins writes. "It replaces workers, devours millions of gallons of water, vomits CO2 into the atmosphere, propagandises exclusively for the worst ideologies, and fills the world with more ugliness and stupidity. Cruelty is the central tenet of right-wing ideology." But this also gives the toolset more agency than it deserves. It blames the knife for the stabbing. At the centre is still human decision making.

Very biological and organic executives at Meta, along with those from OpenAI and Google, are collaborating with the Trump regime to actively deregulate their own industry in order to downplay the harms caused by the inaccurate information it still produces, the dangers of easier deepfake production, and the way it's being marketed against labour markets regardless of its readiness. The National Institute of Standards and Technology (NIST) ordered any researchers working with the Artificial Intelligence Safety Institute (AISI) to stop work on anything related to safety, responsible use or issues of fairness, and to instead focus on the bizarrely titled agenda of "reducing ideological bias, to enable human flourishing and economic competitiveness." This is a technology that is still getting facts wrong a staggering amount of the time, has a business model based on violating the intellectual property rights of artists and authors at scale, and has been saturated with Russian propaganda content that is feeding output along with anything else. These are choices the machine doesn't make. People do.

"Rolling back AI protections signals the pronounced power Big Tech has in the new administration. This includes deploying AI to probe and slash critical government programs and grants." — ACLU

Very biological and organic policymakers are also making decisions on what the machines can do without really consulting the state of the technology. Either lost in hype, or in paranoia fuelled by Sam Altman scaremongering that if OpenAI can't change the definition of fair use then China is going to rule the world, lawmakers act as though they believe that if LLMs imbibe enough words then AGI is going to magically emerge. Maybe they do. But they'd be wrong. The vast majority of AI researchers polled by the Association for the Advancement of Artificial Intelligence (AAAI) agree: "‘scaling up current AI approaches’ to yield AGI is ‘unlikely’ or ‘very unlikely’ to succeed, suggesting doubts about whether current machine learning paradigms are sufficient for achieving general intelligence. The many limitations of transformer-based architectures suggest AGI is hardly right around the corner."

I also happen to think there are decisions made by some still very biological and organic people in the open source software community that are helping — in exchange for a few crumbs — whitewash Big Tech's willingness to collaborate with a regime that's shutting down academic freedom, developing new mass surveillance hellscapes for migrants, criminalising protest and free speech, targeting trans people, attacking women, erasing diversity, and dismantling liberal democracy. I haven't gone into the war crimes this time. But I digress.

Meta makes a big deal about its commitment to open source AI, and I was recently instructed by a very agitated internet person that the company is certainly giving back to the open source development community, and how dare anyone question that!?! This in spite of Stefano Maffulli, the chief executive of the Open Source Initiative, saying back in October (and nothing's changed since) that "Meta was confusing users and polluting the term 'open source' by calling its Llama family of AI models open source." Meta only provides the weights for its AI: the learned numerical values that help the software identify a pattern or make a guess as to what to generate from a prompt. For an AI to be open source, as Timnit Gebru points out, there would need to be public access to the data it was trained and evaluated on, the code, the model architecture, and the model weights.

"If companies such as Meta succeed in turning it into a 'generic term' that they can define for their own advantage, they will be able to 'insert their revenue-generating patents into standards that the EC and other bodies are pushing for being really open.'" — Stefano Maffulli

Open-source software development is hard hit on all sides. It's not funded well. It's funded even worse with the Trump regime's attack on the Open Technology Fund's parent agency, to be sure, but that aside, I get why people make deals with devils. I operate on a "needs must" or "by hook or by crook" ethic myself at times. But remember who you're working with. It's like saying "sure, they eat people, but they also share their recipes online, so you can't say they aren't giving anything back to the community." But I'm still digressing.

So, if it hasn't become apparent by this point, I'm a huge fan of AI tools. No, really. I am constantly creating accounts, downloading things and trying different technologies out whenever they land in front of me. I'm an early adopter and frequent quitter. I read nearly anything on the topic. It's not the technology I have a big problem with; it's the use cases, the hype machine, and the industry that seeks to strip-mine society and burn the planet to maintain bloatware that's primarily aimed at putting people out of work instead of assisting them to work better. There is no point in Sam Altman's zeal to recreate Jorge Luis Borges' Library of Babel inside the data centres feeding his chatbots, other than perpetually moving the goalposts on his shareholders for his overvalued company. We don't need to wait for Meta's promise to build an AGI that Zuckerberg himself can't seem to define. We can have nice things with a lot of tools that have been slapped with the AI label. There is no AGI on the near horizon, and there doesn't need to be. The most promising uses don't require it.

This is fine

- Kitboga is the online nom de guerre of a professional scambaiter who was radicalised when his own gram fell victim to online fraudsters who target elderly people. Using a tools-of-the-enemy approach, and with sponsorship providing technical expertise and funding, he's created a generative AI chatbot farm with realistic voice synthesis that distracts, trolls and wastes the time of online and call centre scam operations to the point of collapse.

- Not all my examples involve weaponisation-for-good and hacking back, but I just love a good revenge story. Computer security researcher Ben Zhao developed Glaze and Nightshade, software that encodes digital images with data that makes machine-learning models unable to read them or add them to their corpus of stolen creative content.

- More and more digital threat detection tools are using AI to keep your bits safe. Attackers are also using AI to create or alter malware to evade these sensors. And on it goes: the battle you can't turn off whenever you turn on your laptop. Keep your OS updated.

- I have a horrible memory. Semantic search (used with scepticism and second-source fact checking) is how I re-find all kinds of things I dimly remember coming across. It's not new technology; however, combining it with large language models, or even small language models for given data troves, makes everything a lot more discoverable. These tools don't need to scrape the entire internet and invade everyone's privacy to work.

- AI toolsets are most at home analysing large data sets to spot anomalies and trends, surface patterns, and find links between things. The Organized Crime and Corruption Reporting Project (OCCRP) has developed its own AI infrastructure stack and investigative toolkit for journalists to make sense of large hoards of data and investigate all kinds of wrongdoing.

- Algorithmic analysis is going to be ever more useful for agricultural work in a world increasingly hit by climate change: optimising irrigation and crop yield, and modelling the impacts on plant life amid changing conditions.

- Similarly, current AI tooling is useful in medical research for spotting signs of various conditions, including skin cancer, breast cancer, dementia and heart disease.

- Most anyone who regularly codes is using shortcuts. Whatever script you're developing is mostly snippets of things others have typed before. Auto-complete IDEs have been a staple for years. I think the use of a generative AI junior coder is acceptable when paired with humans who understand what it's doing (this is key!). Copilot and other such tools aren't cribbing from creators in the way that, say, stealing a novelist's words and style and letting randos try to mimic it on autopilot to pump out titles on Amazon would be.

Note: You still need to know how to code to use a bot as a coding assistant.

The LLM chatbots can't come up with new ideas, but they do recycle old ones in interesting ways that can be either assistive or dangerous. There are things that we can autopilot (malware detection, OCR image filtering to help your doctor find signs of heart conditions, talking to fraudsters, etc.) and others we may want to keep in manual control (writing stories, making art, etc.). Who gets to make those choices? In Diego Rivera's mural, different social forces are seen competing for influence over technological developments of the time. That painting is still useful today, even if the original was trashed, as a reminder that the crossroads of innovation are never completely technical. They're political, economic, moral, ethical, and social. The algorithm is just people, all the way down. All these generative AIs should be open because their training data comes from us, and their models are based on us. They belong to us.

Good night, and good luck.

Edward R. Murrow was talking about TV, but it still works for nearly every technology.